When working with data, especially in machine learning, it’s essential to understand how to prepare and manipulate it to achieve better results.

This process, known as feature engineering, involves transforming, reducing, and selecting features within your dataset to improve the performance of your machine learning models.

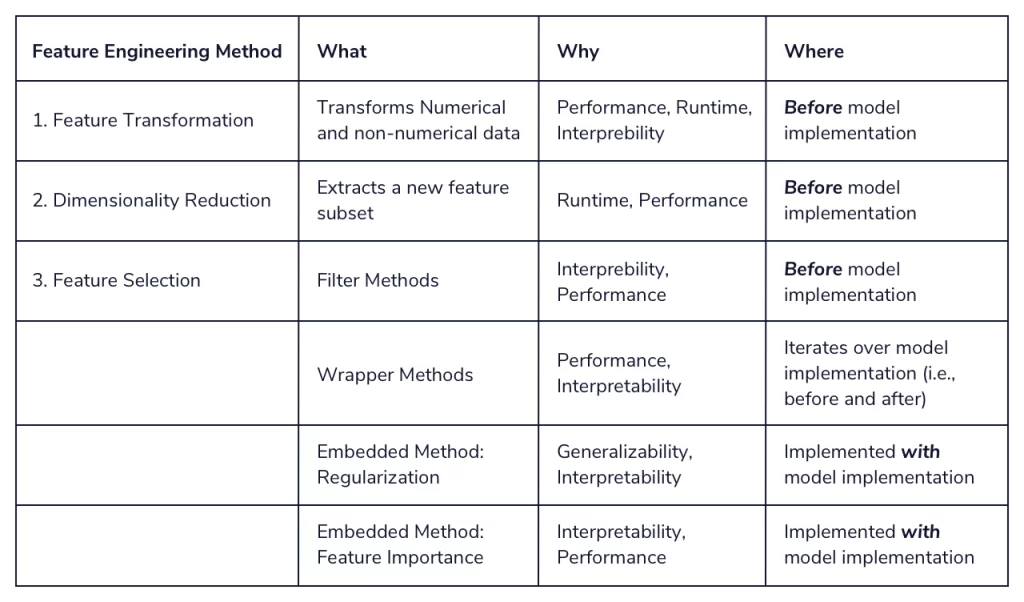

In this article, we’ll break down these three methods, explaining the what, why, and where for each, as well as their pros and cons.

Feature Transformation

What: Feature transformation refers to the process of changing the scale or distribution of your dataset’s features to make them more suitable for analysis. Common techniques include normalization, standardization, and log transformations.

Why: Many machine learning algorithms, particularly those that rely on distances or gradient descent, are sensitive to the scale of their inputs, so putting features on comparable scales can lead to better performance. Transformations can also reduce the influence of skew and extreme values in the raw data.

Where: Feature transformation is commonly used in scenarios where the data contains features with different scales or units, or when the data follows a skewed distribution.
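To make the three techniques named above concrete, here is a minimal sketch using NumPy. The `incomes` array and the helper function names are made up for illustration; they are assumptions, not part of any particular library.

```python
import numpy as np

# Hypothetical toy feature: annual incomes, right-skewed by one large value.
incomes = np.array([20_000.0, 35_000.0, 50_000.0, 65_000.0, 1_000_000.0])

def min_max_normalize(x):
    """Normalization: rescale values linearly to the [0, 1] range."""
    return (x - x.min()) / (x.max() - x.min())

def standardize(x):
    """Standardization: zero mean and unit (population) standard deviation."""
    return (x - x.mean()) / x.std()

def log_transform(x):
    """Log transformation: compress large values with log(1 + x); needs x >= 0."""
    return np.log1p(x)

normalized = min_max_normalize(incomes)
standardized = standardize(incomes)
logged = log_transform(incomes)

print(normalized)    # smallest value maps to 0, largest to 1
print(standardized)  # mean is ~0 and standard deviation is ~1
print(logged)        # the outlier is far less dominant on the log scale
```

Notice that after min-max normalization the four ordinary incomes are squeezed close to 0 because of the single outlier, while the log transformation keeps them clearly separated. This is one reason the choice of transformation depends on the shape of the data.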

Pros:

- Helps improve the performance of machine learning models.

- Makes the data more consistent and easier to interpret.

- Can reduce the effect of skew and outliers in the raw data.

Cons:

- Can distort or discard information if applied carelessly. For example, min-max scaling squeezes most values into a narrow band when outliers are present.

- Requires a good understanding of the data to choose the most appropriate transformation method.

Dimensionality Reduction

What: Dimensionality reduction is the process of reducing the number of features in a dataset while retaining as much information as possible. Popular techniques include Principal Component Analysis (PCA), t-Distributed Stochastic Neighbor Embedding (t-SNE, used mainly for visualization), and Linear Discriminant Analysis (LDA, a supervised method that uses class labels).

Why: Reducing the dimensionality of a dataset can help combat the “curse of dimensionality,” which arises because data becomes increasingly sparse as the number of features grows, so a model needs far more examples to generalize well. Dimensionality reduction can also improve computational efficiency and make the data easier to visualize.

Where: Dimensionality reduction is often used when dealing with high-dimensional datasets, particularly in image recognition, natural language processing, and genomics.
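As an illustration of the idea, the following is a minimal sketch of PCA built on NumPy's singular value decomposition. The `pca` helper and the synthetic dataset are assumptions made for this example; in practice you would more likely use a library implementation.

```python
import numpy as np

def pca(X, n_components):
    """Project X (n_samples x n_features) onto its top principal components."""
    # PCA assumes centered data, so subtract each feature's mean.
    X_centered = X - X.mean(axis=0)
    # SVD of the centered data: the rows of Vt are the principal directions.
    U, S, Vt = np.linalg.svd(X_centered, full_matrices=False)
    components = Vt[:n_components]
    # Variance captured by each direction, as a share of the total variance.
    explained_variance = (S ** 2) / (X.shape[0] - 1)
    explained_ratio = explained_variance[:n_components] / explained_variance.sum()
    return X_centered @ components.T, components, explained_ratio

rng = np.random.default_rng(0)
# Hypothetical data: 200 samples, 3 features, where the third feature is
# almost exactly the sum of the first two (so it adds little information).
base = rng.normal(size=(200, 2))
noise = 0.01 * rng.normal(size=200)
X = np.column_stack([base[:, 0], base[:, 1], base[:, 0] + base[:, 1] + noise])

X_reduced, components, explained_ratio = pca(X, n_components=2)
print(X_reduced.shape)        # (200, 2)
print(explained_ratio.sum())  # share of total variance kept by 2 components
```

Because the third feature here is nearly redundant, two components keep almost all of the variance. Real datasets are rarely this clean, so it is worth checking the explained variance before deciding how many components to keep.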

Pros:

- Helps combat the curse of dimensionality.

- Improves computational efficiency.

- Makes the data easier to visualize.

Cons:

- Discards some information, and the resulting components can be harder to interpret than the original features.

- Choosing the best dimensionality reduction technique can be challenging.

Feature Selection

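What: Feature selection involves identifying and selecting the most relevant features from a dataset for use in a machine learning model. This can be achieved using filter methods (scoring each feature with a statistic such as its correlation with the target), wrapper methods (training a model on different feature subsets and comparing the results), or embedded methods (selection that happens as part of model training, as with L1 regularization).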

Why: By selecting only the most relevant features, a model can become more accurate and efficient, as well as easier to interpret. Feature selection can also help reduce the risk of overfitting.

Where: Feature selection is commonly used when dealing with datasets that contain a large number of features, particularly in financial analysis, health care, and marketing.
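A simple filter method can be sketched in a few lines of NumPy. The function name `select_top_k_by_correlation` and the synthetic data are assumptions for illustration only; this ranks features by absolute Pearson correlation with the target, which is just one of many possible scoring statistics.

```python
import numpy as np

def select_top_k_by_correlation(X, y, k):
    """Filter method: keep the k features most correlated (in absolute value) with y."""
    scores = np.array(
        [abs(np.corrcoef(X[:, j], y)[0, 1]) for j in range(X.shape[1])]
    )
    # Indices of the k highest scores, returned in ascending column order.
    top_k = np.argsort(scores)[::-1][:k]
    return np.sort(top_k), scores

rng = np.random.default_rng(42)
n_samples = 500
X = rng.normal(size=(n_samples, 5))
# Hypothetical target that depends only on features 0 and 3, plus a little noise.
y = 3.0 * X[:, 0] - 2.0 * X[:, 3] + 0.1 * rng.normal(size=n_samples)

selected, scores = select_top_k_by_correlation(X, y, k=2)
print(selected)  # indices of the two highest-scoring features
print(scores)    # one score per feature; the informative ones stand out

X_selected = X[:, selected]  # the reduced feature matrix
```

Scoring each feature on its own is cheap, but it can miss features that are only useful in combination. Wrapper and embedded methods address this at a higher computational cost.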

Pros:

- Can improve model accuracy and efficiency.

- Reduces the risk of overfitting.

- Makes the model easier to interpret.

Cons:

- Can be computationally expensive, particularly for wrapper methods.

- Often benefits from domain knowledge to judge which features are truly relevant.

Conclusion

Feature engineering is a crucial step in the process of building effective machine learning models.

By mastering the techniques of feature transformation, dimensionality reduction, and feature selection, you can better prepare your data for analysis, improving the performance of your models and making them easier to interpret.

Each method comes with its pros and cons, so it’s essential to understand when and where to apply them to achieve the best results.

English bloke in Bangkok. First used GPT-3 in 2020 and has generated millions of words with it since. Not really much of an achievement but at least it demonstrates a smidgen of authority. Studies natural language processing, Python and Thai in his spare time.